Turbocharging LLMs for Scientific Discovery

Date:

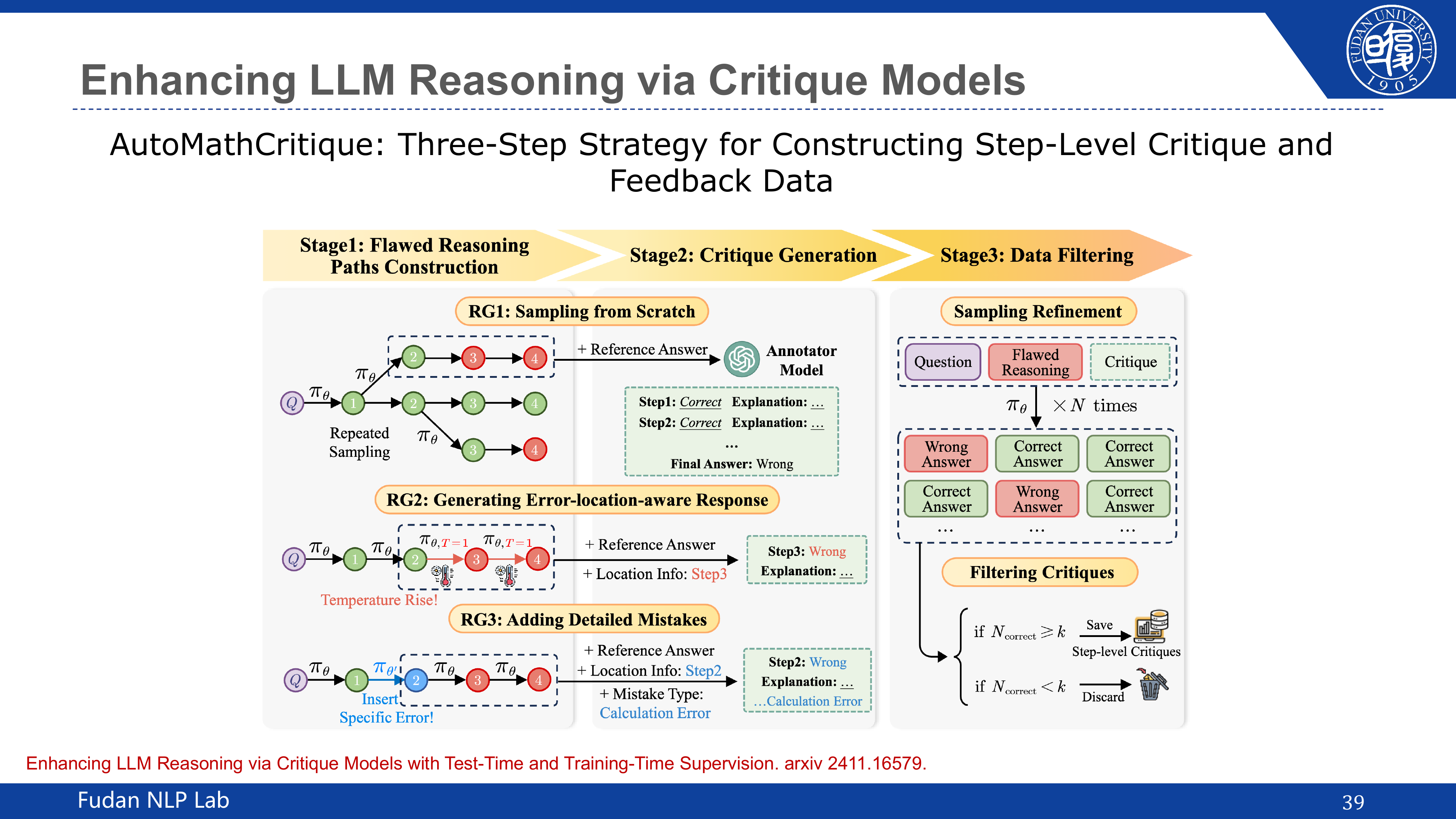

AI is revolutionizing scientific discovery, as highlighted by the 2024 Nobel Prizes in Physics and Chemistry, both awarded for AI-related breakthroughs. This talk explores how large language models (LLMs) can turbocharge scientific research across the full discovery pipeline, from data analysis and hypothesis generation to experimental design. We begin with an overview of LLMs and their emergent abilities, then examine their growing capabilities in reading comprehension, coding, and complex reasoning. A key focus is enhancing LLM reasoning for science, covering techniques such as Chain-of-Thought prompting, self-consistency, process supervision, and critique models. We then discuss agent-based modeling and simulation (ABMS) as a powerful paradigm for studying complex systems, and present recent advances in multimodal multi-agent systems for scientific tasks, including PhysicsMinions for physics olympiad problem solving and AtomAgents for AI-driven materials discovery.